For my final Capstone project in a specialization course on Coursera, I completed a SWOT analysis. The full assignment was to select a business or organization and conduct a SWOT analysis. Specifically, we were to choose one item identified in the weakness or threats analysis and propose a solution. The final project was to create a 10-15 minute presentation, with the idea that it would be presented to executives at the company. For my SWOT analysis, I decided to analyze Netflix.

Why I Chose Netflix

Netflix is one of the most valuable US tech companies right now. In addition, I have been aware of their Culture memo, first published in 2009, which emphasizes a “treat employees like adults” approach to management, including a “keeper test” approach to retention. I really wanted to dig deeper into this. Although Netflix (or at least its stock) seems to flourish with this approach, my intuitive sense was that there was more to it than that.

In addition, I’ve recently come across a few articles about Netflix in the news, such as one about a Netflix-turned-Twitter exec who clashed with the culture at his new workplace. That story seems to be about trying to take what works for a culture at one company and injecting it directly into another.

I was also once a Netflix DVD + streaming customer, from about 2006 to 2012. I completely quit Netflix in 2017. And Netflix has become the “N” in the list of FAANG workplaces tech workers supposedly aspire to join. Having had these experiences with the company, I was happy to find an opportunity to evaluate their business in a structured way.

I intended to include my full report here. Unfortunately, I felt there were a number of students plagiarizing other students’ work, writing suboptimal reports, or having an essay writer complete their project. To keep my work from meeting that fate, I won’t include my full report. But I will include snippets from my main submissions.

The Assignment

The entire assignment was meant to be put together in six weeks, including the SWOT matrix, report, and presentation. The audience for each section was meant to be executives from the company, so everything should be written as though it were going to be presented to C-Suite executives.

An overview of the 3-part assignment is below. Following that, I have included snippets from each section.

Part 1: SWOT Matrix

A 1-page visual presentation of the SWOT analysis. (We could use a template supplied by the course, as well as our own software or tools, which is what I did.)

Part 2: Report

The report should be 7-10 pages, “(double-spaced with 12 point font and 1 inch margins)”. The report should consist of four distinct sections:

- Introduction: Introduction and content setup.

- Description: SWOT analysis. Visual should be included.

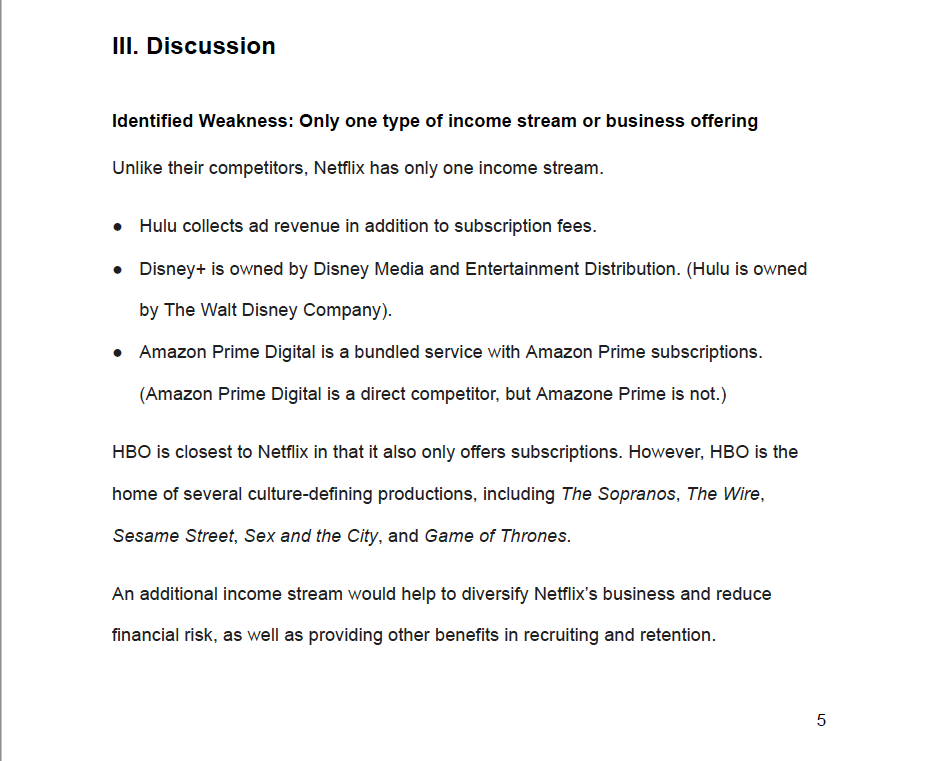

- Discussion: Select one problem identified in the SWOT analysis and propose a solution.

- Conclusions and Recommendations: Recap key findings and proposed recommendations.

Part 3: Presentation

“Create a 15-20 minute presentation to senior management…to enhance and reinforce your audience’s understanding of the most important points in your written report.”

Part 2, The Report: Weakness Identified and Proposal

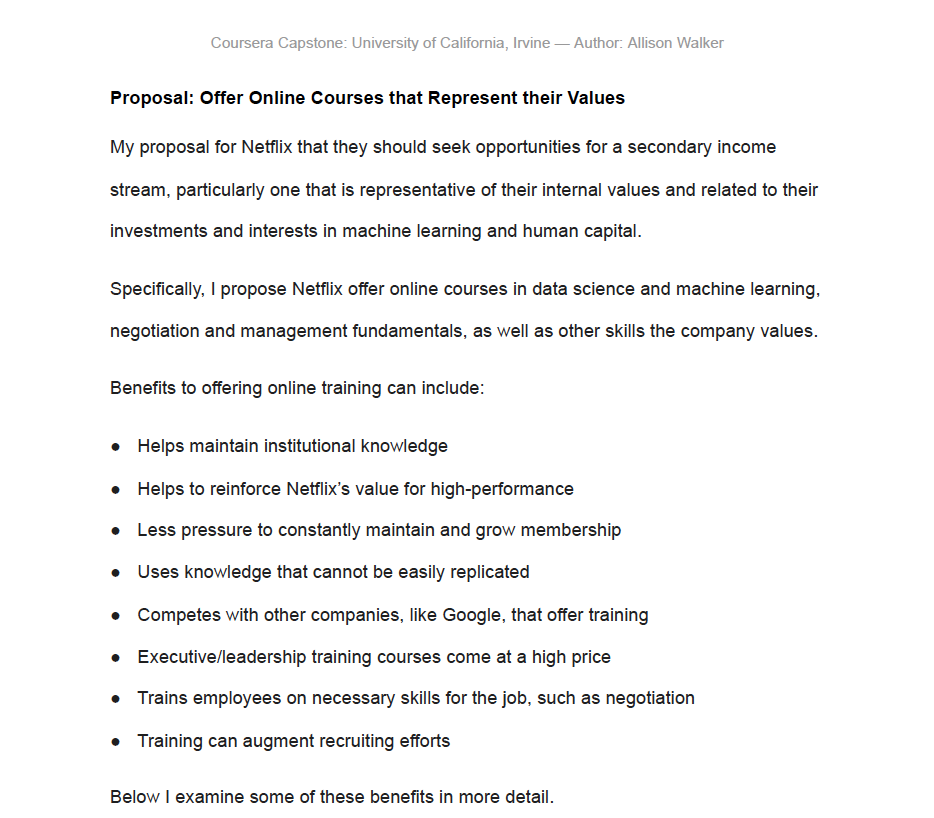

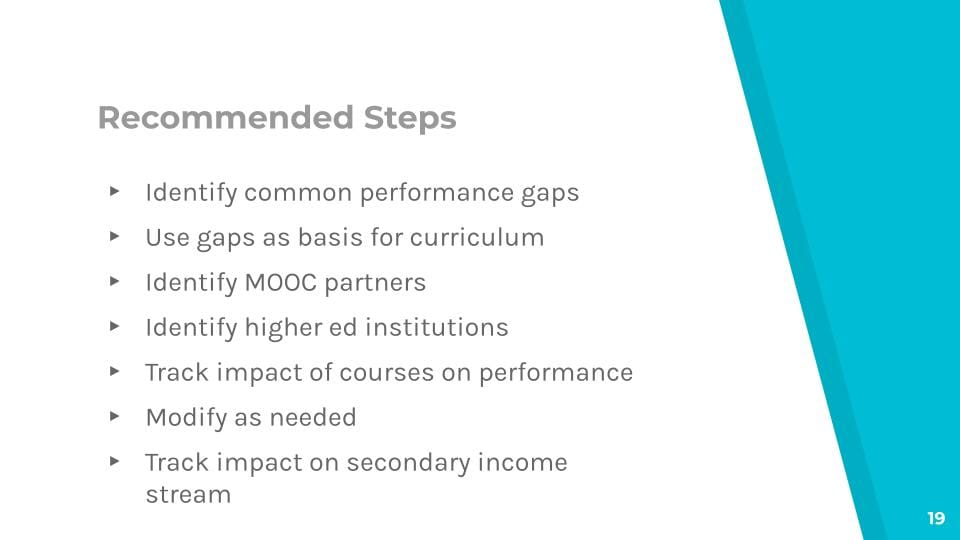

The full requirements were to write a 7-10 page, double-spaced report about the chosen company. My analysis revealed that, in contrast with its competitors, Netflix has only one income stream. I proposed offering online courses that represent Netflix’s values as a way to add a secondary income stream.

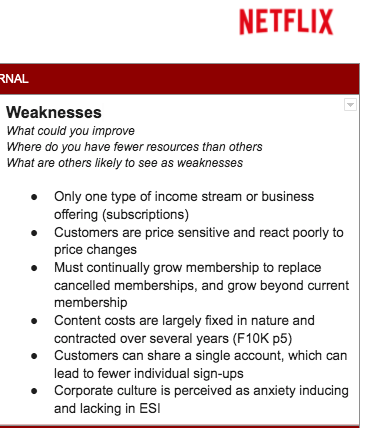

Part 1, SWOT Matrix: Weaknesses

Based on my research, I identified the following weaknesses:

Part 3, Proposal Presentation

My proposal was related to internal training. I’m only including a few slides from my presentation, which I created in Google Slides using a presentation template I’ve used in the past.

Project Outcome

Well, I really do wish I could share my final SWOT matrix, report, and presentation. I worked hard on it and I’m really happy with how it turned out.

But, as I mentioned, due to rampant plagiarism, I don’t feel comfortable putting up the full work. I suspect there will be people using my work anyway.

In any case, my reviewers gave me full marks on my final submission. The rubric includes points for:

- Integrates and incorporates many practices, concepts, methods and techniques found in Career Success Specialization coursework.

- Reflects extensive use of company research to provide considerable insight into the organization.

- Demonstrates a thorough understanding of how SWOT analysis works.

- The target problem chosen is well-defined and clearly stated.

- Demonstrates considerable ability in applying a logical approach to finding a creative solution.

- Information and ideas presented are consistently and critically analyzed, synthesized and well-supported.

- The report is well-written, with exemplary use of logic, organization, flow, style, and mechanics to conform to good business English formats and practices.

- The presentation slides reflect effective use of content, structure, textual and visual graphics to convey the intended message.

I got 3 points, the maximum, for each rubric item.

One person left this feedback: “This was by far the best project I have graded. Well done!” So that’s nice.

Although I kind of wish I’d selected a different font for the report, I think the best way to conclude this is to say, Yay for me! 🙂